Mizzeto offers a comprehensive suite of healthcare services designed to streamline payer back-office operations.

Business Process Automation

Optimizing Healthcare Operations with BPO and Automation for Faster, Smarter Service Delivery.

Accelerate results in utilization management, claims configuration, and beyond with BPO solutions designed for payers. Whether you need end-to-end support or targeted assistance, our flexible solutions adapt to your needs without slowing you down.

Accelerate claims adjudication with intelligent automation and expert handling of exceptions—driving accuracy, speed, and savings.

Ensure accurate benefit interpretation with rules-based configuration that minimizes errors and audit risk.

Optimize enrollment and billing with automated, compliant processes that enhance member experience, minimize errors, and accelerate onboarding and revenue capture.

Eliminate claim delays and costly errors with streamlined provider data management and credentialing processes.

Unlock hidden revenue with accurate payment reconciliation and faster provider recovery workflows.

Digitize inbound mail with high-accuracy data capture to accelerate processing and streamline workflows.

Deliver high-quality member support through scalable contact center teams trained in payer-specific needs, ensuring personalized service while efficiently managing fluctuating call volumes.

Ensure data accuracy and regulatory compliance with rigorous transactional audits that uphold quality and reduce risk.

Empower strategic decisions with custom reports and interactive dashboards delivering actionable insights.

Our payer solutions provide a range of client benefits, including streamlined operations, cost reductions, and improved customer satisfaction. Our global team enables faster claims processing, accurate data analysis, and enhanced decision-making without increasing client costs.

Mizzeto accelerates prior authorization decisions by up to 40% through intelligent intake, automated data validation, and payer-specific rules configuration. This results in faster treatment initiation and improved provider satisfaction.

Mizzeto improves member enrollment turnaround times by streamlining and fully automating the process. It does so through customized system configuration, fewer enrollment file errors, and workflow automation.

For every 3% lift in claims auto-adjudication rate, clients have the potential to save up to $250K annually.

Healthcare operations are complex, fragmented, and buried in manual work. Mizzeto’s automation suite plugs into your existing platforms—like QNXT, Facets, and Epic—without forcing a rip-and-replace.

SLAs aligned to your KPIs, with real-time reporting, performance guarantees, and built-in scalability during peak volumes

Utilize Gen AI to make data-driven decisions and predictions


Optimize workflows and reduce errors with our automation suite

Manage provider data by automating workflows

Minimize provider overpayments using pre-adjudication auditing

Increase auto-adjudication rates through RPA

The rapid acceleration of AI in healthcare has created an unprecedented challenge for payers. Many healthcare organizations are uncertain about how to deploy AI technologies effectively, often fearing unintended ripple effects across their ecosystems. Recognizing this, Mizzeto recently collaborated with a Fortune 25 payer to design comprehensive AI data governance frameworks—helping streamline internal systems and guide third-party vendor selection.

This urgency is backed by industry trends. According to a survey by Define Ventures, over 50% of health plan and health system executives identify AI as an immediate priority, and 73% have already established governance committees.

However, many healthcare organizations struggle to establish clear ownership and accountability for their AI initiatives. When different departments implement AI solutions independently and without coordination, efforts become fragmented, leaving the organization open to data breaches, compliance risks, and massive regulatory fines.

AI Data Governance in healthcare, at its core, is a structured approach to managing how AI systems interact with sensitive data, ensuring these powerful tools operate within regulatory boundaries while delivering value.

For payers wrestling with multiple AI implementations across claims processing, member services, and provider data management, proper governance provides the guardrails needed to safely deploy AI. Without it, organizations risk not only regulatory exposure but also the potential for PHI data leakage—leading to hefty fines, reputational damage, and a loss of trust that can take years to rebuild.

Healthcare AI governance can be boiled down to three key principles: Protect People, Prioritize Equity, and Promote Health Value.

For payers, protecting member data isn’t just about ticking compliance boxes—it’s about earning trust, keeping it, and staying ahead of costly breaches. When AI systems handle Protected Health Information (PHI), security needs to be baked into every layer, leaving no room for gaps.

To start, payers can double down on essentials like end-to-end encryption and role-based access controls (RBAC) to keep unauthorized users at bay. But that’s just the foundation. Real-time anomaly detection and automated audit logs are game-changers, flagging suspicious access patterns before they spiral into full-blown breaches. Meanwhile, differential privacy techniques ensure AI models generate valuable insights without ever exposing individual member identities.
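As one illustration of the differential privacy idea mentioned above, the sketch below adds Laplace noise to a member count before it is released. It is a minimal example, not a production mechanism: the function name `noisy_count`, the `epsilon` value, and the sample count are assumptions made for illustration, and a real deployment would also need privacy budget tracking.

```python
import random


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Return a count perturbed with Laplace noise (sensitivity assumed to be 1).

    Adding or removing one member changes a count by at most 1, so the noise
    scale is 1 / epsilon. Smaller epsilon means more noise and more privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace distribution centered at 0 with that scale.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


if __name__ == "__main__":
    rng = random.Random(7)  # fixed seed so the demo is repeatable
    # Hypothetical query: members with a given diagnosis in one region.
    print(round(noisy_count(1280, epsilon=0.5, rng=rng), 1))
```

The released value stays close to the true count in aggregate, while any single member's presence or absence is masked by the noise.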

Enter risk tiering—a strategy that categorizes data based on its sensitivity and potential fallout if compromised. This laser-focused approach allows payers to channel their security efforts where they’ll have the biggest impact, tightening defenses where it matters most.

On top of that, data minimization strategies work to reduce unnecessary PHI usage, and automated consent management tools put members in the driver’s seat, letting them control how their data is used in AI-powered processes. Without these layers of protection, payers risk not only regulatory crackdowns but also a devastating hit to their reputation—and worse, a loss of member trust they may never recover.

AI should break down barriers to care, not build new ones. Yet, biased datasets can quietly drive inequities in claims processing, prior authorizations, and risk stratification, leaving certain member groups at a disadvantage. To address this, payers must start with diverse, representative datasets and implement bias detection algorithms that monitor outcomes across all demographics. Synthetic data augmentation can fill demographic gaps, while explainable AI (XAI) tools ensure transparency by showing how decisions are made.
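A simple form of the outcome monitoring described above is to compare approval rates across member groups and flag any group that lags well behind the best-served one. The sketch below assumes decisions arrive as `(group, approved)` pairs; the group labels, the sample data, and the 0.8 ratio threshold are illustrative assumptions, not a regulatory standard.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Compute the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def flag_disparities(rates, min_ratio=0.8):
    """Return groups whose rate is below min_ratio times the highest rate."""
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, r in rates.items() if r / best < min_ratio)


if __name__ == "__main__":
    # Hypothetical prior authorization outcomes for three member groups.
    sample = (
        [("A", True)] * 90 + [("A", False)] * 10
        + [("B", True)] * 60 + [("B", False)] * 40
        + [("C", True)] * 85 + [("C", False)] * 15
    )
    rates = approval_rates(sample)
    print(rates)
    print(flag_disparities(rates))
```

A flagged group is a prompt for review, not proof of bias; differences in case mix can also move these rates.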

But technology alone isn’t enough. AI Ethics Committees should oversee model development to ensure fairness is embedded from day one. Adversarial testing—where diverse teams push AI systems to their limits—can uncover hidden biases before they become systemic issues. By prioritizing equity, payers can transform AI from a potential liability into a force for inclusion, ensuring decisions support all members fairly. This approach doesn’t just reduce compliance risks—it strengthens trust, improves engagement, and reaffirms the commitment to accessible care for everyone.

AI should go beyond automating workflows—it should reshape healthcare by improving outcomes and optimizing costs. To achieve this, payers must integrate real-time clinical data feeds into AI models, ensuring decisions account for current member needs rather than outdated claims data. Furthermore, predictive analytics can identify at-risk members earlier, paving the way for proactive interventions that enhance health and reduce expenses.

Equally important are closed-loop feedback systems, which validate AI recommendations against real-world results, continuously refining accuracy and effectiveness. At the same time, FHIR-based interoperability enables AI to seamlessly access EHR and provider data, offering a more comprehensive view of member health.

To measure the full impact, payers need robust dashboards tracking key metrics such as cost savings, operational efficiency, and member outcomes. When implemented thoughtfully, AI becomes much more than a tool for automation—it transforms into a driver of personalized, smarter, and more transparent care.

An AI Governance Committee is a necessity for payers focused on deploying AI technologies in their organization. As artificial intelligence becomes embedded in critical functions like claims adjudication, prior authorizations, and member engagement, its influence touches nearly every corner of the organization. Without a central body to oversee these efforts, payers risk a patchwork of disconnected AI initiatives, where decisions made in one department can have unintended ripple effects across others. The stakes are high: fragmented implementation doesn’t just open the door to compliance violations—it undermines member trust, operational efficiency, and the very purpose of deploying AI in healthcare.

To be effective, the committee must bring together expertise from across the organization. Compliance officers ensure alignment with HIPAA and other regulations, while IT and data leaders manage technical integration and security. Clinical and operational stakeholders ensure AI supports better member outcomes, and legal advisors address regulatory risks and vendor agreements. This collective expertise serves as a compass, helping payers harness AI’s transformative potential while protecting their broader healthcare ecosystem.

At Mizzeto, we’ve partnered with a Fortune 25 payer to design and implement advanced AI Data Governance frameworks, addressing both internal systems and third-party vendor selection. Throughout this journey, we’ve found that the key to unlocking the full potential of AI lies in three core principles: Protect People, Prioritize Equity, and Promote Health Value. These principles aren’t just aspirational—they’re the bedrock for creating impactful AI solutions while maintaining the trust of your members.

If your organization is looking to harness the power of AI while ensuring safety, compliance, and meaningful results, let’s connect. At Mizzeto, we’re committed to helping payers navigate the complexities of AI with smarter, safer, and more transformative strategies. Reach out today to see how we can support your journey.

Feb 21, 2024 • 2 min read

On April 2, 2026, CMS finalized the Contract Year 2027 Medicare Advantage and Part D rule,[1] and the changes will reshape how health plans earn, protect, and lose revenue for years to come. CMS finalized the removal or retirement of 11 Star Ratings measures, declined to implement the Health Equity Index reward, added a new Depression Screening and Follow-Up measure, codified the Inflation Reduction Act's Part D benefit redesign, tightened supplemental benefit oversight, updated call recording retention requirements, and scaled back several health equity provisions. The agency received over 42,000 public comments on the proposed rule.[2]

The financial stakes are significant. According to CMS projections published in the final rule, the Star Ratings changes are estimated to have a net impact of approximately $18.6 billion on the Medicare Trust Fund over the 2027 to 2036 period.[3] Independent actuarial estimates, including analyses from Milliman and Wakely Consulting, suggest roughly 25% of contracts could lose half a Star, with at least 42 contracts potentially falling below the 4.0 threshold that determines quality bonus payments.[4] Industry analyses from Press Ganey and others estimate that by 2029, CAHPS and HOS survey measures could account for nearly 40% of total Star weight,[5] meaning the administrative measures that padded most plans' ratings are gone, and member experience is now the primary financial driver.

The rule touches nearly every operational function in a health plan. The Star Ratings overhaul is the headline: CMS finalized the removal or retirement of 11 measures, many of which were considered topped-out administrative or process measures with little performance variation across plans. CMS retained the Diabetes Care Eye Exam measure after comment period feedback, and added a new Depression Screening and Follow-Up measure for the 2027 measurement year (reflected in 2029 Stars). CMS also declined to implement the Health Equity Index reward, retaining the historical reward factor instead.

Beyond Stars, CMS codified the IRA's Part D benefit redesign into permanent regulation: the coverage gap is eliminated, a $2,000 annual out-of-pocket cap is in place, and catastrophic phase cost sharing is zero. CMS strengthened supplemental benefit oversight, including requiring that debit cards used for SSBCI be electronically linked to plan-covered items through an identification mechanism at the point of sale. CMS also updated call recording retention requirements, reducing the overall retention period for marketing and sales calls from 10 years to six with a tiered structure. Separately, documentation supporting coverage determinations must be retained in original format, including audio files; CMS has indicated that failure to produce original-format documentation may result in adverse audit findings, including potential PDE record adjustments.[6] CMS also loosened marketing rules (eliminating the 48-hour SOA waiting period and the 12-hour gap between educational and marketing events), scaled back or deferred several health equity provisions for QI programs and UM Committees, and rescinded the mid-year supplemental benefit notice mandate.

Table 1: CY2027 Star Ratings Measure Changes

CAHPS Now Drives the Revenue Equation

With industry estimates projecting survey measures could approach 40% of total Star weight by 2029, every member interaction that feeds a CAHPS response carries direct financial consequence. CAHPS measures getting needed care, getting appointments quickly, customer service quality, and health plan information. Each maps directly to call center performance: how quickly a member reaches a knowledgeable agent, whether the issue was resolved on the first call, and whether the member felt the plan gave them the information they needed.

Plans that treated member experience as secondary to clinical gap closure need to reverse that calculus. The AHA raised concerns during the comment period that CAHPS is high-level and lagged,[7] which underscores the operational problem: by the time CAHPS data reveals an issue, the damage spans months of interactions. Plans need real-time quality intelligence, not annual survey results, to manage at the speed CMS now requires.

The Language Access Measure Is Gone. The Requirement Is Not.

CMS removed the Call Center Foreign Language Interpreter and TTY Availability measure from Stars, effective 2028. But CMS will continue enforcing language access through compliance mechanisms, and member experience with language access will be captured through CAHPS survey questions.[8] When language access was a binary, pass-fail administrative measure, plans met the standard by having an interpreter line available. Now it is measured through member experience surveys. The bar shifts from availability to quality. Did the Spanish-speaking member feel heard? Was the Mandarin-speaking member's question actually resolved? Multilingual quality monitoring becomes more important under this rule, not less.

Complaints, Retention, and the Signals You Are About to Lose

The Complaints about the Health Plan and Drug Plan measures are both removed, as is Members Choosing to Leave the Plan. Plans used these as governance signals for grievance operations and retention. Their removal from Stars does not mean CMS stops watching; these will likely continue as display measures and compliance enforcement tools. Complaint trends remain among the strongest leading indicators of CAHPS deterioration. And every lost member still represents lost premium revenue. The difference now is that plans lose the early warning signals. The QA system that monitors member interactions must compensate by surfacing complaint trends and churn risk from interaction data, feeding quality insights directly into retention strategy.

Depression Screening Creates a Member Services Coordination Challenge

The new Depression Screening and Follow-Up measure evaluates two rates: the percentage of eligible members screened, and the percentage who receive follow-up care within 30 days of a positive screen. The screening rate depends on clinical workflows. The follow-up rate depends on member services infrastructure: outreach, appointment scheduling, and confirmation. Plans that silo this as a purely clinical initiative will underperform.
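The two rates can be sketched as follows. This is a simplified illustration of the arithmetic only: the `MemberRecord` fields and the sample data are hypothetical, and the official measure specification defines eligibility, exclusions, and qualifying follow-up in ways this sketch does not model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class MemberRecord:
    screened_on: Optional[date] = None    # None if never screened
    positive: bool = False                # screen result, if screened
    follow_up_on: Optional[date] = None   # None if no follow-up recorded


def screening_rates(records, window_days=30):
    """Return (screening_rate, follow_up_rate) for a list of eligible members."""
    eligible = len(records)
    screened = [r for r in records if r.screened_on is not None]
    positives = [r for r in screened if r.positive]
    # Follow-up counts only if it lands within the window after the screen.
    timely = [
        r for r in positives
        if r.follow_up_on is not None
        and 0 <= (r.follow_up_on - r.screened_on).days <= window_days
    ]
    screening_rate = len(screened) / eligible if eligible else 0.0
    follow_up_rate = len(timely) / len(positives) if positives else 0.0
    return screening_rate, follow_up_rate


if __name__ == "__main__":
    members = [
        MemberRecord(date(2027, 3, 1), positive=False),
        MemberRecord(date(2027, 3, 1), positive=True, follow_up_on=date(2027, 3, 11)),
        MemberRecord(date(2027, 3, 1), positive=True, follow_up_on=date(2027, 4, 15)),
        MemberRecord(),  # never screened
    ]
    print(screening_rates(members))
```

In the sample data, the member whose follow-up arrives 45 days after the positive screen lowers the second rate even though care was eventually delivered, which is why outreach and scheduling speed matter.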

Part D Benefit Changes Will Hit the Phones

The codified three-phase benefit structure (deductible, initial coverage, catastrophic) replaces the four-phase model members have known for years. The $2,000 out-of-pocket cap is the most significant Part D financial protection in a generation, but it requires agents to explain new cost-sharing mechanics accurately. Members will call about why their coverage gap disappeared, what counts toward TrOOP, and what happens at the OOP threshold. Every call center needs updated knowledge base content, retrained agents, and revised IVR scripts before October 2026. Benefit misinformation during AEP is one of the fastest paths to CAHPS degradation.

Debit Card Declines Will Become Call Volume

CMS strengthened SSBCI debit card requirements, including that cards be electronically linked to plan-covered items through an identification mechanism at the point of sale. In practice, this means tighter verification when members use flex cards. When a member's card is declined at a store because a specific item does not qualify, the next action is a phone call. Member services teams should anticipate a new category of inbound inquiries. Plans that do not prepare agent scripts and escalation workflows will see resolution times spike and CAHPS-relevant frustration increase.

Appeals Measures Gone: BPO Accountability Gap Widens

Two appeals measures are removed: Plan Makes Timely Decisions about Appeals and Reviewing Appeals Decisions. For plans outsourcing appeals to BPO vendors, this eliminates one of the few externally visible accountability signals on that process. Plans must build internal SLA monitoring to ensure outsourced operations maintain standards. The risk is not a Star Rating drop; it is a CMS compliance finding.

Health Equity Provisions Scaled Back: The Mandate Is Gone. CAHPS Is Not.

CMS scaled back or deferred several health equity provisions in this rule: the HEI reward was not implemented, QI program disparity reduction requirements were removed, UM Committee equity expert and analysis mandates were eliminated, and the supplemental benefit notice was rescinded. For plans serving significant dual-eligible or LEP populations, the regulatory pressure is reduced but the operational reality is unchanged. Experience disparities still surface in CAHPS. Voluntarily maintaining equity-focused quality monitoring, particularly multilingual QA, is not compliance theater. It is CAHPS protection.

Table 2: CY2027 Final Rule Operational Impact Matrix

When evaluating solutions, health plan leaders should look for these capabilities:

100% interaction monitoring, not sampling. With CAHPS and survey measures carrying increasingly dominant weight in Star Ratings, sampling-based QA cannot identify the systemic patterns that drive survey responses.

Multilingual quality scoring at native-language fidelity. With language access measurement shifting to CAHPS, multilingual QA must be integrated into the same framework applied to English interactions.

Real-time coaching signals. CAHPS is lagged. Quality intelligence must surface coaching opportunities within hours, not quarters.

CAHPS-aligned scoring frameworks. The QA scorecard must mirror what CMS measures: getting needed care, customer service, getting appointments, and health plan information.

Complaint and churn early warning. With complaint and disenrollment measures removed, the QA platform must surface these signals from interaction data.

Plan-owned data and analytics. If your quality data lives inside a vendor's platform, you do not own your operational intelligence. That intelligence must belong to the plan.

Mizzeto's Multilingual QA Solution was built for this inflection point: AI-powered quality monitoring across 100% of member interactions, in multiple languages, with CAHPS-aligned scoring, real-time coaching signals, and complaint and churn analytics. All data stays in the plan's hands. For more on connecting these capabilities to call center performance, see our guides on improving call center performance and modernizing call center operations.

The rule is effective June 1, 2026. Marketing begins October 1. Coverage starts January 1, 2027. The 2027 measurement year, which feeds 2029 Star Ratings, will be the first scored under the new CAHPS-heavy measure set. Plans that retool now have time. Plans that wait for 2029 ratings to reveal a problem will discover it started in 2027.

1. CMS, 'Contract Year 2027 Medicare Advantage and Part D Final Rule' Fact Sheet, April 2, 2026.

2. Federal Register, CMS-4208-F3/CMS-4212-F, published April 6, 2026.

3. CMS Final Rule financial projections; Becker's Hospital Review, April 2, 2026.

4. Milliman, 'Falling Star Rating Trajectory,' December 2025. Healthcare Labyrinth, April 2026.

5. Press Ganey, December 2025. Upward Growth, April 2026. Healthcare Labyrinth corroborates.

6. AArete, 'Reading the Signals,' December 2025.

7. American Hospital Association, Comment Letter on CY 2027 Proposed Rule, January 26, 2026.

8. Holland & Knight, April 2026. Crowell & Moring, December 2025.

Jan 30, 2024 • 6 min read

Most health plan operations leaders can tell you their average handle time and their cost per call. Very few can tell you what a single transferred call actually costs when you follow it all the way through the system.

That transferred call triggers a second interaction at $4.90 or more.[1] It resets the resolution clock. It inflates the member’s frustration, which shows up months later in a CAHPS survey the plan cannot retroactively fix. And if the plan is running a Medicare Advantage contract, that CAHPS score is tied directly to Star Ratings, which determine quality bonus payments worth tens of millions in annual revenue.[2]

The real problem is not the cost of one bad call. It is that the way most health plans measure call center quality today was designed for a different era, and it is structurally incapable of seeing how many bad calls are happening, or why.

First call resolution is the most important metric in any health plan contact center. SQM Group’s benchmarking across more than 100 leading North American healthcare call centers puts the industry average FCR rate at 71%. Only 4% of those centers reach the world-class threshold of 80% or higher.[3]

That means roughly 29% of member calls require a callback, transfer, or follow-up. In some studies, the number is far worse: one analysis found the average healthcare FCR rate sitting at 52%, meaning more than half of all member inquiries go unresolved on first contact.[4]

Each of those unresolved calls carries a compounding cost. SQM Group’s research shows that a 1% improvement in FCR translates to approximately $286,000 in annual operational savings for a typical midsize call center.[5] That is not a theoretical model. That is reduced repeat volume, shorter queues, and lower agent workload.

Now consider the member experience side. Satisfaction drops roughly 15% every time a member has to call back about the same issue.[6] The call that started as a routine benefits question becomes, by the third attempt, a complaint. And complaints have an FCR rate of just 47%.[7]

Healthcare call centers face transfer rates as high as 19%.[8] Each transfer does three expensive things simultaneously.

First, it adds direct cost. A transferred call requires a second agent, a second set of minutes, and often a longer total handle time than a single well-routed interaction. With average handle times running 6.6 minutes and average costs at $4.90 per call, a transferred call effectively doubles the expense of that member interaction.

Second, it destroys member confidence. Talkdesk’s survey of 330 health plan members found that 78% described their experience with their insurers as less than seamless. The leading cause was not claims denials or billing errors. It was poor customer service, cited by 31% of respondents.[9] Being transferred between departments and repeating the same information is the archetype of that frustration.

Third, and most overlooked, transfers create data fragmentation. When a call moves from one agent to another, the wrap-up codes, disposition notes, and resolution status become inconsistent. The first agent may mark the call as resolved because they transferred it. The second agent may not log the original call reason. The result is that the plan’s reporting shows two “handled” calls instead of one unresolved member issue.
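One way to picture the fix is to count member issues rather than call legs. The sketch below is a minimal illustration that assumes every call leg carries a `member_id`, an `issue_id` linking related legs, and a `transferred` flag; those field names are hypothetical, and many telephony exports will need a linking key to be derived before this kind of grouping is possible.

```python
from collections import defaultdict


def issue_level_resolution(call_legs):
    """Group call legs by (member_id, issue_id) and judge each issue once.

    An issue counts as resolved on first contact only if it has exactly one
    leg and that leg was not ended by a transfer.
    """
    issues = defaultdict(list)
    for leg in call_legs:
        issues[(leg["member_id"], leg["issue_id"])].append(leg)

    resolved_first_contact = sum(
        1 for legs in issues.values()
        if len(legs) == 1 and not legs[0]["transferred"]
    )
    return {
        "handled_legs": len(call_legs),
        "member_issues": len(issues),
        "resolved_first_contact": resolved_first_contact,
    }


if __name__ == "__main__":
    legs = [
        {"member_id": "m1", "issue_id": "i1", "transferred": True},   # first agent
        {"member_id": "m1", "issue_id": "i1", "transferred": False},  # second agent
        {"member_id": "m2", "issue_id": "i2", "transferred": False},
    ]
    print(issue_level_resolution(legs))
```

Leg-level reporting would show three handled calls here; issue-level reporting shows two member issues, only one of which was resolved on first contact.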

Many of these transfers are not agent errors. They are routing failures: an IVR that sends a prior authorization status call to a general benefits queue, or a system that cannot identify a member’s preferred language and routes them to an English-only agent by default. These are infrastructure and configuration problems that compound silently across thousands of calls.

Here is where the structural problem becomes clear.

The traditional approach to call center quality assurance, whether run in-house or through an outsourced partner, reviews between 2% and 5% of total interactions. In many operations, the number sits closer to 2%.[10] That means 95% or more of member calls are never evaluated by anyone.

The math alone makes the approach statistically indefensible. A 3% random sample of 800,000 annual calls captures 24,000 interactions. If 232,000 of those calls are repeat contacts, the sample will catch only a small fraction of them, and it will almost never catch the systemic patterns that cause them.

The deeper issue is not just sample size. It is what the QA program is designed to measure. Most legacy QA scorecards evaluate whether an agent followed a script, greeted the member properly, and used compliant language. They do not measure whether the member’s issue was actually resolved, whether the call could have been prevented by better routing, or whether the same question has been asked 500 times this month because a benefit change was poorly communicated.

When quality measurement is limited to agent-level compliance on a tiny sample, the operational problems that drive repeat calls, unnecessary transfers, and member dissatisfaction remain invisible. QA scores can look strong while member experience deteriorates, because the scorecard and the member’s reality are measuring different things.

For Medicare Advantage plans, this is not just an operational inconvenience. It is a revenue problem measured in tens of millions.

CAHPS survey results have historically carried a 4x weight in CMS Star Ratings calculations. While the weighting shifted to 2x for Star Year 2026, CAHPS measures remain a significant driver of overall ratings. CMS’s proposed rules for 2027 and beyond signal that member experience will become an even larger share of the total score, with CAHPS and HOS projected to make up nearly 40% of total Star weight by 2029.[11]

The financial stakes are hard to overstate. The gap between a 3.5-star and a 4+ star plan can translate to tens of millions of dollars in annual quality bonus payments. In 2026, only about 40% of MA-PD contracts achieved 4 stars or higher, the lowest proportion in over five years.[12]

Every repeat call, every unnecessary transfer, every escalation that leaves a member frustrated is a data point that can move CAHPS scores. A plan cannot fix a bad call center experience with a follow-up mailer.

Consider a mid-size Medicaid managed care plan handling 800,000 member calls per year. At a 71% FCR rate, roughly 232,000 of those calls require a repeat contact. At $4.90 per call, the repeat volume alone represents more than $1.1 million in direct costs annually, and that does not account for the extended handle times, supervisor escalations, or member complaints those calls generate.

Now suppose the plan’s QA program reviews 3% of calls. That is 24,000 calls reviewed out of 800,000. The 232,000 repeat interactions? They are almost entirely invisible, because repeat calls do not cluster conveniently in a random 3% sample.
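The arithmetic in this scenario can be worked through directly. The sketch below uses the figures from the text (800,000 calls, 71% FCR, $4.90 per call, a 3% sample) and, like the text, treats each unresolved call as one repeat contact. The function name and the assumption of a uniform random sample are illustrative; integer math in cents avoids floating-point rounding.

```python
def sampling_blind_spot(annual_calls, fcr_pct, cost_cents_per_call, sample_pct):
    """Work through the repeat-call and QA-sample arithmetic with integers."""
    repeat_calls = annual_calls * (100 - fcr_pct) // 100
    repeat_cost_dollars = repeat_calls * cost_cents_per_call // 100
    sampled_calls = annual_calls * sample_pct // 100
    # In a uniform random sample, repeat calls appear at their overall share.
    sampled_repeats = sampled_calls * (100 - fcr_pct) // 100
    return {
        "repeat_calls": repeat_calls,
        "repeat_cost_dollars": repeat_cost_dollars,
        "sampled_calls": sampled_calls,
        "sampled_repeats": sampled_repeats,
        "unreviewed_repeats": repeat_calls - sampled_repeats,
    }


if __name__ == "__main__":
    result = sampling_blind_spot(800_000, fcr_pct=71, cost_cents_per_call=490, sample_pct=3)
    for name, value in result.items():
        print(f"{name}: {value:,}")
```

Under these assumptions the repeat volume costs $1,136,800, and the 24,000-call sample would be expected to contain about 6,960 repeat calls, leaving roughly 225,040 repeat interactions that no reviewer ever hears.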

The plan sees a QA dashboard that shows 90%+ compliance scores. The quality team reports stable performance. Meanwhile, CAHPS scores are flat or declining, member complaints are rising, and the CX team cannot pinpoint why.

This is not a failure of the people doing the work. It is a failure of the measurement infrastructure. The plan is making decisions based on what 3% of its interactions reveal, while the other 97% contain the signals that actually explain member experience.

One of the most overlooked drivers of call center inefficiency in health plans is language access. Medicaid and dual-eligible populations frequently include members whose primary language is not English. When these members reach an agent who cannot serve them in their preferred language, the result is almost always a transfer, extended hold time, or an unresolved interaction.

CMS requires that Medicare Advantage and Medicaid managed care plans provide meaningful language access. But compliance is often measured at the policy level, not the interaction level. A plan may have interpreter services available, but if the routing logic does not match members to bilingual agents and QA does not evaluate non-English interactions, language-related service failures become invisible in aggregate metrics.

This matters because the members most affected are often the most vulnerable: elderly, disabled, low-income, or limited English proficient populations whose CAHPS responses carry the same weight as every other member’s. A plan that underserves this segment is not just creating an equity gap. It is creating a Star Ratings exposure that shows up 12 to 18 months later in the measurement cycle.

The answer is not to bring everything in-house or to stop working with operational partners. The answer is to modernize how quality is measured, who owns the data, and what the plan can actually see. Whether your call center is in-house, outsourced, or hybrid, these capabilities separate plans that manage costs from plans that manage outcomes.

100% interaction monitoring, not sampling. Any quality program that evaluates only a fraction of calls will always miss the patterns that drive repeat contacts and member dissatisfaction. AI-powered monitoring across voice, chat, and digital channels is now operationally viable and should be the baseline expectation.

Multilingual QA that matches the member population. If your plan serves Medicaid or Medicare Advantage populations, quality monitoring must cover non-English interactions with the same rigor as English calls. This means native-language evaluation, not post-hoc translation of transcripts.

Plan-owned quality measurement. Regardless of who operates the call center, the plan should own the quality data. When quality measurement is controlled entirely by the team handling the calls, there is no independent check on whether reported performance matches member reality.

Root-cause analytics, not just scorecards. A QA score tells you whether an agent followed a script. It does not tell you why members are calling back, which call types drive the most transfers, or where routing logic is failing. Modern QA surfaces the operational signals behind the numbers.

Direct linkage to CAHPS and Star Ratings strategy. Call center performance and Star Ratings are not separate workstreams. Quality data from member interactions should feed directly into Stars strategy, giving plans the ability to intervene before CAHPS surveys go into the field.

Operational intelligence, not just compliance reporting. The goal is not a cleaner scorecard. It is the ability to see which processes are broken, which member segments are at risk, and which changes will move the metrics that matter.
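The sampling point above can be made concrete with a small probability sketch. It assumes each call is selected for review independently with probability equal to the sampling rate, which is a simplification and not a description of any particular QA program. Under that assumption, the chance that no call showing a given failure pattern is ever reviewed is (1 - rate) raised to the number of affected calls. The 5% rate and the pattern sizes are hypothetical.

```python
def chance_pattern_is_missed(sampling_rate: float, affected_calls: int) -> float:
    """Probability that no affected call lands in the reviewed sample.

    Assumes each call is reviewed independently with probability
    `sampling_rate` (an illustrative simplification).
    """
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0 and 1")
    if affected_calls < 0:
        raise ValueError("affected_calls must be non-negative")
    return (1.0 - sampling_rate) ** affected_calls


if __name__ == "__main__":
    for affected in (1, 10, 25):  # hypothetical pattern sizes
        missed = chance_pattern_is_missed(0.05, affected)
        print(f"{affected:>3} affected calls: {missed:.0%} chance the pattern is never reviewed")
```

With a 5% sample, a problem that touches ten calls still has roughly a 60% chance of never appearing in review. With full monitoring (a rate of 1.0) that chance drops to zero for any pattern that occurs at all.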

Mizzeto’s Multilingual QA Solution was built to give health plans 100% visibility into call center quality across every language their members speak. Rather than relying on sampling or siloed scorecards, the platform uses AI to monitor and score every member interaction, surfacing the compliance risks, service failures, and repeat-call drivers that legacy QA methods cannot detect. Whether your call center is in-house, outsourced, or a combination, Mizzeto puts quality oversight and operational intelligence back in the hands of the plan.

The most expensive call in your contact center is not the one that takes 12 minutes. It is the one that generates three more calls, a formal complaint, and a CAHPS response that pulls your Star Rating below the bonus threshold.

Health plans have spent years optimizing the visible costs: average handle time, headcount, per-call rates. The invisible costs, the ones hiding in the 95% of calls nobody reviews, are where the real money is. The plans that figure this out first will not just run more efficient call centers. They will have a structural advantage in Star Ratings, member retention, and the ability to make operational decisions based on what is actually happening.

The call center is not a cost center to be minimized. It is an intelligence asset to be owned.

[1] DialogHealth, “Latest Healthcare Call Center Statistics,” 2025.

[2] Ameridial, “Health Plan Member Services Outsourcing for Star Ratings,” 2026.

[3] SQM Group, “Why FCR Matters to Healthcare Insurance Call Centers.”

[4] Physicians Angels, “Healthcare Call Center Statistics To Know,” 2025.

[5] Talkdesk, “How Payers Can Improve Member Experience with Modern Contact Centers.”

[6] TheAIQMS, “AI QMS for BPO: Scaling Contact Center Quality Without Expanding QA Teams,” 2025.

[7] Enthu.ai, “Call Center Quality Assurance,” 2026.

[8] Press Ganey, “CMS Just Ignited the Biggest Stars Shake-Up in a Decade,” December 2025.

[9] Oliver Wyman, “How Plans Can Win as Medicare Advantage Star Ratings Change,” 2025.

[10] CAQH, “2025 CAQH Index: U.S. Healthcare Avoided $258 Billion,” February 2026.

[11] CMS, “2026 Star Ratings Fact Sheet,” November 2025.

Jan 30, 2024 • 6 min read

Your UM director just told you the team averaged 8.5 days on standard prior auths last quarter. You nodded, made a note, moved on. In six months, that number becomes a regulatory violation.

For years, health plans have complained about prior authorization burdens: opaque decisioning, variable outcomes, slow turnaround, escalating provider frustration. Half-hearted automation efforts and hybrid analog-digital processes made the problem more visible without solving it.

CMS is now codifying expectations in a way that forces every payer to face reality: the way prior authorization has been done cannot survive 2026.

The changes coming from the CMS Interoperability and Prior Authorization Final Rule aren't incremental technical requirements. They're operational inflection points that will expose long-standing design flaws in prior authorization and utilization management. Leaders who wait until enforcement deadlines will find themselves reacting. Those who act now can redesign the system itself.

Most plans are asking: "What do we have to do to comply with CMS by 2026?" That's a tactical question.

The strategic question is: How do we redesign our prior authorization engine so it performs at the speed, transparency, and explainability levels CMS expects without burning clinical resources, inflating costs, or fragmenting operations?

Checking boxes gets you compliant. Redesigning the system gets you competitive.

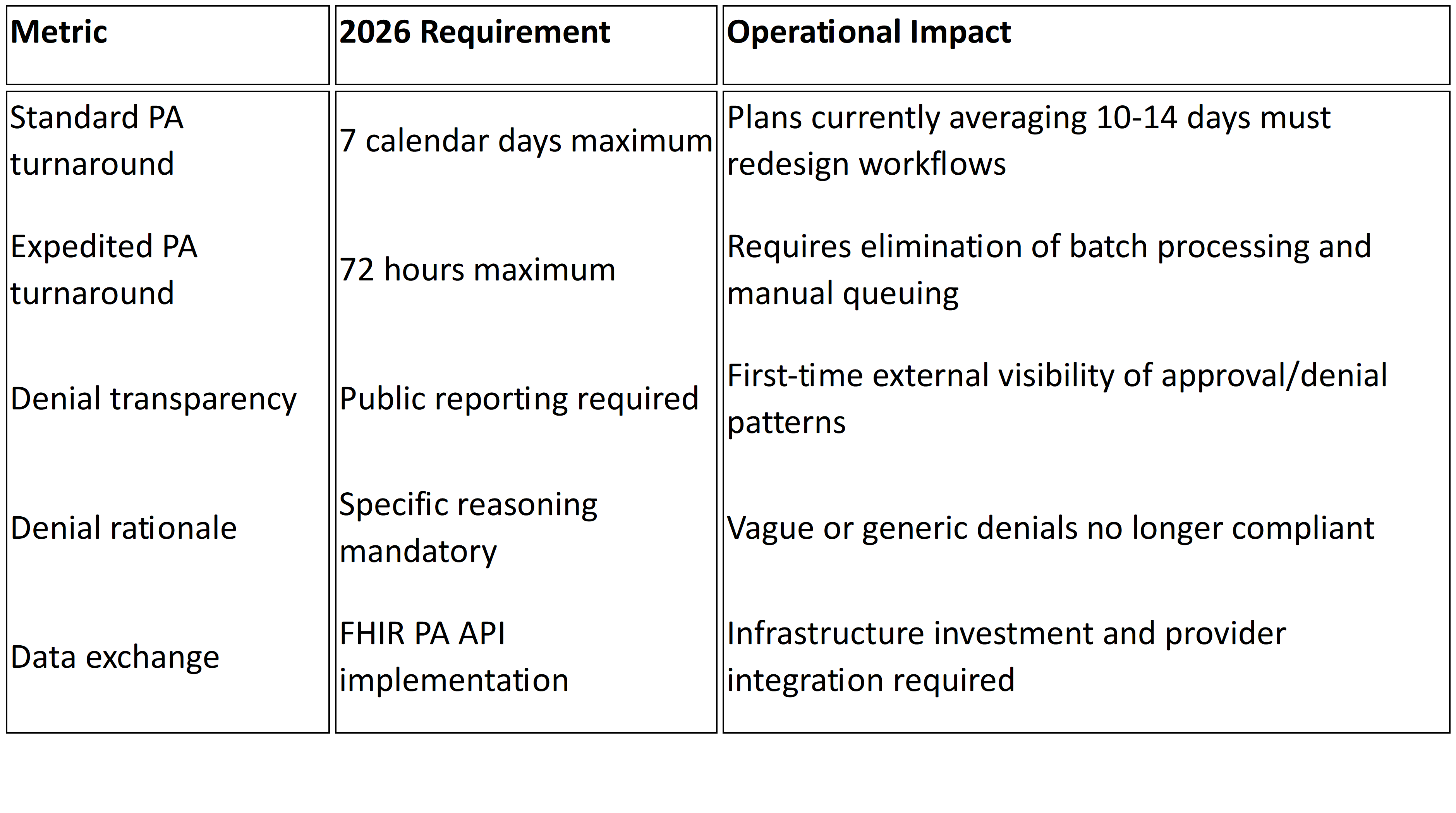

Beginning January 1, 2026, CMS moves prior authorization from operational best practice to regulatory mandate.

Under the Interoperability and Prior Authorization Final Rule (CMS-0057-F), impacted payers, including Medicare Advantage, Medicaid managed care organizations, CHIP, and certain Qualified Health Plans, must comply with several non-negotiable requirements:¹

72-hour turnaround for expedited prior authorization requests

Seven calendar days for standard requests

Specific, actionable denial reasons included with every adverse determination

Public reporting of prior authorization metrics, including approval rates, denial rates, and average processing times, beginning March 31, 2026²

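FHIR-based APIs to support electronic prior authorization workflows and expanded data access (the rule's API compliance dates generally begin January 1, 2027, later than the turnaround and reporting requirements)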

Here's what this means in practice:

These aren't tweaks to existing workflows. They introduce enforceable timelines, public transparency, and standardized data exchange that most legacy UM environments were never built to support.

The 2026 requirements don't create new operational weaknesses. They expose existing ones.

You Can't Hire Your Way to 72-Hour Compliance

If your prior authorization process depends on manual triage, inconsistent intake validation, or batch review cycles, meeting the 72-hour and seven-day mandates becomes structurally challenging. Missed SLAs are no longer internal performance issues. They become regulatory violations.

The constraint is workflow design, not headcount. Adding clinical reviewers may temporarily reduce queue depth, but it doesn't eliminate intake latency, fragmented decision logic, or rework loops that consume days before cases reach clinical evaluation.
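The two clocks described above can be sketched in a few lines. This is a minimal illustration that assumes the clock starts at the receipt timestamp and that the windows are exactly the 72 hours and seven calendar days cited in this article. The function names are hypothetical, and any extensions or exceptions in the rule text are ignored here.

```python
from datetime import datetime, timedelta

# Timeframes as stated in this article; confirm against the rule text before relying on them.
EXPEDITED_WINDOW = timedelta(hours=72)
STANDARD_WINDOW = timedelta(days=7)  # calendar days, not business days


def decision_deadline(received_at: datetime, expedited: bool) -> datetime:
    """Latest decision time, assuming the clock starts at receipt."""
    window = EXPEDITED_WINDOW if expedited else STANDARD_WINDOW
    return received_at + window


def hours_remaining(received_at: datetime, expedited: bool, now: datetime) -> float:
    """Hours left before the deadline; negative means the deadline has passed."""
    remaining = decision_deadline(received_at, expedited) - now
    return remaining.total_seconds() / 3600


if __name__ == "__main__":
    received = datetime(2026, 1, 5, 9, 0)
    print("Expedited due:", decision_deadline(received, expedited=True))
    print("Standard due: ", decision_deadline(received, expedited=False))
    # Three days lost to intake follow-up on a standard request.
    after_follow_up = received + timedelta(days=3)
    print("Hours left after follow-up:", hours_remaining(received, False, after_follow_up))
```

The last line shows why headcount alone does not help: time spent chasing missing documentation is deducted from the same window clinical reviewers have to work within.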

Your Denial Rates Are About to Become Public

For the first time, denial rates and processing times will be publicly reported, beginning March 31, 2026.²

Plans with high denial rates, particularly those with elevated appeal overturn percentages, will face scrutiny from regulators, providers, and beneficiaries. Appeal overturn rates that were previously internal quality metrics become public signals about determination consistency.

Denials frequently reversed on appeal start looking less like utilization management discipline and more like systematic dysfunction.

Unstructured Intake Creates SLA Risk

Any workflow relying on fax, email attachments, or unstructured documentation creates intake uncertainty. Under 2026 mandates, that uncertainty translates directly into SLA exposure. What was operational inconvenience becomes regulatory vulnerability.

When requests arrive incomplete or in unstructured formats, the clock has already started but clinical review cannot. Days get consumed in follow-up and clarification before actual determination work begins.

Policy Fragmentation Becomes Audit Risk

Medical policies in PDFs. Coverage criteria configured separately in UM systems. Benefit rules embedded in claims engines.

When these layers diverge, denial rationale becomes inconsistent. Inconsistent rationale fuels appeals. Appeal patterns become public metrics tracked by CMS and visible to your provider network.

The 2026 rule requires "a specific reason for a denial"¹ in a manner that allows providers to understand what additional information or clinical criteria would result in approval. Fragmented policy governance makes this level of specificity difficult to maintain consistently across thousands of determinations.

API Implementation Without Operational Alignment Fails

FHIR-based Prior Authorization APIs are mandated under the final rule,¹ but successful implementation requires more than technical connectivity.

These APIs demand structured, standardized data; clear mapping of coverage rules; real-time status tracking; and determination traceability. Treating API implementation as a technical bolt-on without aligning internal policy logic and workflow orchestration creates compliance on paper but operational brittleness in practice.

Reporting Infrastructure Will Strain Multiple Teams

Public reporting requires consolidated, accurate, reconcilable data. The rule requires payers to publicly report metrics including prior authorization decisions, denial reasons, and turnaround times.²

Most plans currently track these metrics across multiple systems: intake portals, UM platforms, claims engines. Without centralized reporting architecture, compliance becomes a manual reconciliation exercise rather than an automated output.
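As a rough illustration of reporting as an automated output, the sketch below computes approval rate, denial rate, and average turnaround from a single consolidated list of determinations. The `Determination` record and its field names are hypothetical, and the metric definitions actually required for public reporting are more granular than this (for example, standard and expedited requests are reported separately).

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class Determination:
    """Hypothetical consolidated record; real systems will carry more fields."""
    request_id: str
    received_at: datetime
    decided_at: datetime
    approved: bool


def summarize(determinations: list[Determination]) -> dict:
    """Compute headline metrics from one reconciled source of records."""
    if not determinations:
        raise ValueError("no determinations to summarize")
    total = len(determinations)
    approvals = sum(1 for d in determinations if d.approved)
    turnaround_days = [
        (d.decided_at - d.received_at).total_seconds() / 86400
        for d in determinations
    ]
    return {
        "total": total,
        "approval_rate": approvals / total,
        "denial_rate": (total - approvals) / total,
        "avg_turnaround_days": mean(turnaround_days),
    }


if __name__ == "__main__":
    start = datetime(2026, 1, 5, 9, 0)
    sample = [
        Determination("A", start, datetime(2026, 1, 6, 9, 0), True),
        Determination("B", start, datetime(2026, 1, 7, 9, 0), True),
        Determination("C", start, datetime(2026, 1, 8, 9, 0), False),
        Determination("D", start, datetime(2026, 1, 11, 9, 0), True),
    ]
    print(summarize(sample))
```

The calculation itself is trivial. The hard part the paragraph above describes is producing that single reconciled list from intake portals, UM platforms, and claims engines.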

What Forward-Thinking Plans Are Doing Differently

The plans that will meet and leverage the 2026 expectations approach the problem differently.

They Treat Prior Authorization as a System, Not a Function

Rather than thinking in terms of "PA teams" or "PA tech stacks," they define a unified decision pipeline: intake → policy → decision → evidence → reporting. Every component must be architected for speed, traceability, and defensibility.

They Engineer Intake for Decision Readiness

Systems that treat intake as a validation and structuring event, not just data capture, dramatically reduce downstream review time. When requests arrive complete and structured, decisions get smarter and faster.

If a significant portion of requests require follow-up for missing clinical documentation, days burn before clinical review even starts. Fixing intake fixes throughput.
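Treating intake as a validation event can be as simple as refusing to queue a request until its required fields are present. The sketch below is a minimal illustration. The field list, status labels, and function names are assumptions made for the example and would differ by plan and service type.

```python
# Hypothetical required fields; a real list would vary by service category.
REQUIRED_FIELDS = (
    "member_id",
    "requesting_provider_npi",
    "service_code",
    "diagnosis_code",
    "clinical_notes",
)


def missing_fields(request: dict) -> list[str]:
    """Return required fields that are absent or blank, in a stable order."""
    gaps = []
    for field in REQUIRED_FIELDS:
        value = request.get(field)
        if value is None or (isinstance(value, str) and not value.strip()):
            gaps.append(field)
    return gaps


def triage(request: dict) -> tuple[str, list[str]]:
    """Send complete requests to clinical review; bounce incomplete ones immediately."""
    gaps = missing_fields(request)
    if gaps:
        return "return_to_submitter", gaps
    return "clinical_queue", []


if __name__ == "__main__":
    incoming = {
        "member_id": "M-1001",
        "requesting_provider_npi": "1234567890",
        "service_code": "70553",
        "diagnosis_code": "",
    }
    print(triage(incoming))
```

Returning the specific gaps at submission time, rather than days later in a reviewer's queue, is what keeps follow-up from consuming the decision window.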

They Govern Policy and Logic Centrally

If policy resides in PDFs, disparate tools, and tribal knowledge, automation fails. Aligning policy logic, configuration, and deployment is the prerequisite for defensible, explainable decisions that meet CMS transparency expectations.

Centralized policy governance ensures reviewers apply consistent standards across all determinations, directly impacting appeal rates and public reporting metrics.

They Accelerate FHIR API Adoption Strategically

Forward-leaning plans are adopting FHIR Prior Authorization APIs now, enabling electronic request and response, reducing provider friction, and establishing a foundation for real-time decisioning rather than batch processing.

This isn't just compliance theater. It's infrastructure for the next decade of utilization management.

Most organizations' instinctive reaction to tighter SLAs is staffing expansion. Consider what that investment looks like:

Clinical hiring: Expanding nurse reviewer teams to handle faster turnaround requirements

Reporting resources: Staff to reconcile metrics across systems for public reporting compliance

API implementation: Technical infrastructure for FHIR PA API deployment and provider integration

Policy governance: Often unfunded, leading to continued fragmentation and appeal exposure

The alternative is investing in redesigning the decision pipeline itself. Structured intake, centralized policy logic, and automated workflow orchestration reduce review burden while improving consistency. The ROI isn't just compliance. It's operational leverage.

If you're treating this as a compliance checklist, you're already behind. This is a fundamental redesign of how utilization management operates.

By Q2 2025: Audit your current PA workflow end-to-end. Identify where time gets consumed: intake validation, clinical review queues, policy lookup, documentation rework, peer-to-peer scheduling. Measure your actual turnaround distribution, not averages.

By Q3 2025: Centralize policy governance. Map coverage criteria to decision logic. Ensure clinical reviewers are applying consistent standards that can withstand public scrutiny and audit review.

By Q4 2025: Implement structured intake that validates completeness before requests enter clinical queues. Stand up reporting infrastructure that consolidates metrics in real time.

By Q1 2026: Conduct dry runs of public reporting. Simulate 72-hour expedited workflows under peak volume. Validate FHIR API functionality with key provider groups.
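The Q2 step above asks for the turnaround distribution rather than the average, and a short sketch shows why. The sample data, the seven-day threshold default, and the function name are illustrative assumptions.

```python
from statistics import mean, quantiles


def turnaround_profile(days: list[float], sla_days: float = 7.0) -> dict:
    """Summarize turnaround times by percentile and by share over the SLA."""
    if len(days) < 2:
        raise ValueError("need at least two observations")
    cuts = quantiles(days, n=100, method="inclusive")  # 99 percentile cut points
    late = sum(1 for d in days if d > sla_days)
    return {
        "mean": mean(days),
        "p50": cuts[49],
        "p90": cuts[89],
        "share_over_sla": late / len(days),
    }


if __name__ == "__main__":
    # Hypothetical turnaround times, in days, for ten standard requests.
    sample = [2, 3, 3, 4, 4, 5, 5, 6, 9, 12]
    profile = turnaround_profile(sample)
    for name, value in profile.items():
        print(f"{name}: {value:.2f}")
```

In this made-up sample the mean is 5.3 days, comfortably under seven, yet two of the ten requests miss the deadline. An average can look compliant while the tail is not.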

The plans that redesign now won't just comply. They'll operate with structural advantage.

We built Smart Auth after years of working inside health plan operations, seeing firsthand where prior authorization workflows break down. It's designed to make prior authorization decision-ready from intake through policy application and final determination.

Smart Auth structures data at intake, aligns policy logic centrally, and supports the traceability required for timely decisions and transparent reporting. It enables defensible, explainable determinations at the speed CMS expects without requiring massive clinical hiring or fragmented point solutions.

In 2026, prior authorization performance won't be judged internally. It will be measured, reported, and compared publicly. The question isn't whether to redesign. It's whether you start now or spend 2026 firefighting compliance gaps while your metrics become part of the public record.


Physicians and their staff complete an average of 39 prior authorization requests per week. They spend roughly 13 hours processing them.¹ When requests get denied, more than 80% of those denials are partially or fully overturned on appeal, meaning the care was appropriate all along.²

That is not a utilization management program working as intended. That is a system generating unnecessary friction, burning clinical resources, and producing decisions it cannot defend.

Auto approvals were supposed to fix this. Route the obvious cases through automatically. Free up clinical reviewers for complex decisions. Cut turnaround times. Reduce provider abrasion.

Most health plans tried some version of this approach. Few got the results they expected.

The math behind auto approvals is straightforward. If a significant share of prior authorization requests are routine, policy-aligned, and destined for approval anyway, why route them through manual clinical review?

The problem is execution. In a recent KFF analysis of Medicare Advantage data, denial rates ranged from 4.2% at Elevance Health to 12.8% at UnitedHealth Group.² Those rates might seem low. But when over 80% of denied requests are overturned on appeal, the real story becomes clear: plans are denying care they will ultimately approve, just with extra steps, extra cost, and extra delay.

According to the CAQH Index, only 35% of medical prior authorizations are conducted fully electronically.³ Manual prior authorization transactions cost providers $10.97 each. Fully electronic ones cost $5.79, roughly half. For payers, the gap is even wider: $3.52 per manual transaction versus five cents for a fully electronic one.⁴
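The per-transaction figures above translate into a simple calculation. The sketch below uses only the costs and the 35% electronic share quoted in this article. The annual volume is hypothetical, and treating every non-electronic request as fully manual is a simplifying assumption that makes the result an upper bound, since some of those requests are partially electronic.

```python
from decimal import Decimal

# Per-transaction costs and electronic share as quoted in this article.
PROVIDER_MANUAL = Decimal("10.97")
PROVIDER_ELECTRONIC = Decimal("5.79")
PAYER_MANUAL = Decimal("3.52")
PAYER_ELECTRONIC = Decimal("0.05")
ELECTRONIC_SHARE = Decimal("0.35")


def savings_per_transaction(manual: Decimal, electronic: Decimal) -> Decimal:
    """Cost avoided each time a manual transaction becomes fully electronic."""
    return manual - electronic


def payer_savings_if_all_electronic(annual_volume: int) -> Decimal:
    """Upper-bound payer savings, assuming the non-electronic share is fully manual."""
    manual_volume = Decimal(annual_volume) * (1 - ELECTRONIC_SHARE)
    return manual_volume * savings_per_transaction(PAYER_MANUAL, PAYER_ELECTRONIC)


if __name__ == "__main__":
    print("Provider savings per transaction:",
          savings_per_transaction(PROVIDER_MANUAL, PROVIDER_ELECTRONIC))
    print("Payer savings per transaction:   ",
          savings_per_transaction(PAYER_MANUAL, PAYER_ELECTRONIC))
    # Hypothetical plan handling 100,000 prior authorization requests a year.
    print("Payer savings at 100,000 requests:",
          payer_savings_if_all_electronic(100_000))
```

Per transaction, that is $5.18 saved on the provider side and $3.47 on the payer side. For the hypothetical 100,000-request plan, the payer-side ceiling comes to $225,550 a year.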

Auto approvals should be eating into these costs. For most plans, they are not.

The failure mode looks the same everywhere. A health plan bolts an auto approval layer onto a prior authorization workflow that was designed for manual review. Every request enters the same intake funnel. Data arrives incomplete or inconsistently structured. Clinical documentation lands as bulk attachments, hundreds of pages of chart notes that no automation can parse reliably.

Under those conditions, auto approval rules get conservative fast. Exceptions multiply. Edge cases pile up. The system cannot distinguish between a straightforward imaging request that matches policy criteria and a complex surgical case requiring genuine clinical judgment. So it sends both to manual review, because it cannot trust its own inputs.

The result: auto approvals exist on paper but barely dent the queue. Reviewers still touch most cases. And the 40% of physician practices that now employ staff exclusively to handle prior authorization paperwork¹ see no relief.

Three specific blockers keep this pattern locked in place.

Intake is broken. Requests arrive via fax, portal, phone, and EDI, often missing required fields. When the system cannot confirm a request is complete, it cannot auto approve it. We wrote about this problem in detail in our piece on modernizing UM intake. The front end is where most prior authorization delay actually begins.

Policy logic is fragmented. Medical policies live in PDFs. Clinical criteria are configured in the UM platform. Benefit rules sit in the claims system. No single source of truth exists for “should this request be approved?” When three systems disagree, the default answer is always manual review.

Nobody owns the auto approval rate. UM owns clinical appropriateness. IT owns the platform. Compliance owns regulatory exposure. No single executive is accountable for the percentage of requests that bypass manual review, so nobody optimizes for it.

The health plans getting auto approvals right are not buying better automation. They are fixing the preconditions that make automation possible.

That means restructuring intake so requests arrive complete and policy-aligned before any decision logic runs. It means centralizing medical policy so criteria are applied consistently, not interpreted differently by different reviewers on different shifts. And it means surfacing clinical evidence in context: extracting the three data points that matter for a routine imaging request, rather than dumping a 200-page chart into a reviewer’s queue.

This is the approach behind Mizzeto’s Smart Auth. Instead of asking “can this request be auto approved?” Smart Auth asks “is this request decision-ready?” That distinction matters. A decision-ready request has complete data, matches a known policy pathway, and meets explicit criteria thresholds, so the system approves it with confidence, not with crossed fingers.
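The three conditions named above (complete data, a known policy pathway, explicit criteria thresholds) can be expressed as a simple gate. The sketch below is illustrative only. The class, the field names, and the idea of counting satisfied criteria are assumptions made for the example, not a description of Smart Auth's data model or logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PriorAuthRequest:
    """Hypothetical request summary produced by intake and policy matching."""
    request_id: str
    is_complete: bool              # all required intake data present
    policy_pathway: Optional[str]  # matched policy pathway, or None if no match
    criteria_met: int              # documented criteria satisfied
    criteria_required: int         # criteria the pathway requires


def is_decision_ready(req: PriorAuthRequest) -> bool:
    """All three conditions must hold before automation is trusted."""
    return (
        req.is_complete
        and req.policy_pathway is not None
        and req.criteria_required > 0
        and req.criteria_met >= req.criteria_required
    )


def route(req: PriorAuthRequest) -> str:
    """Anything short of decision-ready goes to a human reviewer."""
    return "auto_approve" if is_decision_ready(req) else "manual_review"


def decision_ready_rate(requests: list[PriorAuthRequest]) -> float:
    """Share of requests that arrive decision-ready."""
    if not requests:
        return 0.0
    return sum(1 for r in requests if is_decision_ready(r)) / len(requests)


if __name__ == "__main__":
    batch = [
        PriorAuthRequest("R1", True, "imaging-mri-brain", 3, 3),
        PriorAuthRequest("R2", True, None, 0, 0),
        PriorAuthRequest("R3", False, "imaging-mri-brain", 3, 3),
        PriorAuthRequest("R4", True, "surgery-spine", 2, 4),
    ]
    for req in batch:
        print(req.request_id, route(req))
    print("Decision-ready rate:", decision_ready_rate(batch))
```

Note that the gate only ever routes toward approval or toward a human. In this sketch nothing is denied automatically, which keeps adverse determinations with clinical reviewers.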

CMS is pushing the entire industry in this direction. Under the Interoperability and Prior Authorization Final Rule (CMS-0057-F), impacted payers must respond to standard prior authorization requests within seven calendar days and expedited requests within 72 hours, effective January 1, 2026.⁵ Plans must also publicly report prior authorization metrics, including approval rates, denial rates, and average turnaround times, beginning March 31, 2026.⁵

Those timelines are not aspirational. They are regulatory. And plans that still route 65% of prior authorization through manual or partially manual channels³ will not meet them by hiring more reviewers. The only realistic path is systematic auto approval of decision-ready requests, which means fixing intake, policy logic, and data quality first. We laid out the full compliance timeline in The Countdown to 72/7.

The HL7 Da Vinci FHIR Implementation Guides (CRD, DTR, and PAS) provide the technical scaffolding for this shift, enabling real-time coverage requirement discovery and electronic prior authorization submission.⁶ Plans that invest in FHIR-based infrastructure now are not just meeting a compliance deadline. They are building the foundation for auto approvals that actually scale.

If your auto approval rate is stagnant, the problem is not your approval logic. It is everything upstream: incomplete intake, fragmented policy, and data your system cannot trust.

Start by measuring what percentage of prior authorization requests arrive decision-ready: complete, structured, and policy-aligned before they hit clinical review. That number is your ceiling for sustainable auto approvals. Everything you do to raise it directly reduces manual review volume, improves turnaround performance, and positions your plan for CMS-0057-F compliance.

At Mizzeto, Smart Auth was designed to close exactly this gap. Not by approving more requests automatically, but by ensuring the right requests never need manual review in the first place.

If auto approvals exist in your organization but still feel fragile, that fragility is the signal. Let’s talk about it.

¹ American Medical Association, “2024 AMA Prior Authorization Physician Survey,” 2024. ama-assn.org

² KFF, “Medicare Advantage Insurers Made Nearly 53 Million Prior Authorization Determinations in 2024,” January 2025. kff.org

³ CAQH, “Priority Topics: Prior Authorization,” 2024. caqh.org

⁴ 4sight Health, “The Costly Lever of Prior Authorization” (citing 2023 CAQH Index data), February 2024. 4sighthealth.com

⁵ Centers for Medicare & Medicaid Services, “CMS Interoperability and Prior Authorization Final Rule (CMS-0057-F),” January 17, 2024. cms.gov

⁶ CAQH CORE, “Navigating the CMS 0057 Final Rule: A Guide for Implementing Prior Authorization Requirements,” 2024. caqh.org
