On April 2, 2026, CMS finalized the Contract Year 2027 Medicare Advantage and Part D rule [1], and the changes will reshape how health plans earn, protect, and lose revenue for years to come. CMS finalized the removal or retirement of 11 Star Ratings measures, declined to implement the Health Equity Index reward, added a new Depression Screening and Follow-Up measure, codified the Inflation Reduction Act's Part D benefit redesign, tightened supplemental benefit oversight, updated call recording retention requirements, and scaled back several health equity provisions. The agency received over 42,000 public comments on the proposed rule [2].

The financial stakes are significant. According to CMS projections published in the final rule, the Star Ratings changes are estimated to have a net impact of approximately $18.6 billion on the Medicare Trust Fund over the 2027 to 2036 period [3]. Independent actuarial estimates, including analyses from Milliman and Wakely Consulting, suggest roughly 25% of contracts could lose half a Star, with at least 42 contracts potentially falling below the 4.0 threshold that determines quality bonus payments [4]. Industry analyses from Press Ganey and others estimate that by 2029, CAHPS and HOS survey measures could account for nearly 40% of total Star weight [5]. With the administrative measures that padded most plans' ratings gone, member experience becomes the primary financial driver.

What CMS Changed: The Complete Picture

The rule touches nearly every operational function in a health plan. The Star Ratings overhaul is the headline: CMS finalized the removal or retirement of 11 measures, many of which were considered topped-out administrative or process measures with little performance variation across plans. CMS retained the Diabetes Care Eye Exam measure after comment period feedback, and added a new Depression Screening and Follow-Up measure for the 2027 measurement year (reflected in 2029 Stars). CMS also declined to implement the Health Equity Index reward, retaining the historical reward factor instead.

Beyond Stars, CMS codified the IRA's Part D benefit redesign into permanent regulation: the coverage gap is eliminated, a $2,000 annual out-of-pocket cap is in place, and catastrophic phase cost sharing is zero. CMS strengthened supplemental benefit oversight, including requiring that debit cards used for SSBCI be electronically linked to plan-covered items through an identification mechanism at the point of sale. CMS also updated call recording retention requirements, reducing the overall retention period for marketing and sales calls from 10 years to 6 under a tiered structure. Separately, documentation supporting coverage determinations must be retained in original format, including audio files; CMS has indicated that failure to produce original-format documentation may result in adverse audit findings, including potential PDE record adjustments [6]. CMS also loosened marketing rules (eliminating the 48-hour SOA waiting period and the 12-hour gap between educational and marketing events), scaled back or deferred several health equity provisions for QI programs and UM Committees, and rescinded the mid-year supplemental benefit notice mandate.

Table 1: CY2027 Star Ratings Measure Changes

Operational Impact: What This Means for Member Services and Payer Operations

CAHPS Now Drives the Revenue Equation

With industry estimates projecting survey measures could approach 40% of total Star weight by 2029, every member interaction that feeds a CAHPS response carries direct financial consequence. CAHPS asks members about getting needed care, getting appointments quickly, customer service quality, and health plan information. Each of these maps directly to call center performance: how quickly a member reaches a knowledgeable agent, whether the issue was resolved on the first call, and whether the member felt the plan gave them the information they needed.

Plans that treated member experience as secondary to clinical gap closure need to reverse that calculus. The AHA raised concerns during the comment period that CAHPS is high-level and lagged [7], which underscores the operational problem: by the time CAHPS data reveals an issue, the damage spans months of interactions. Plans need real-time quality intelligence, not annual survey results, to manage at the speed CMS now requires.

The Language Access Measure Is Gone. The Requirement Is Not.

CMS removed the Call Center Foreign Language Interpreter and TTY Availability measure from Stars, effective 2028. But CMS will continue enforcing language access through compliance mechanisms, and member experience with language access will be captured through CAHPS survey questions [8]. When language access was a binary, pass-fail administrative measure, plans met the standard by having an interpreter line available. Now it is measured through member experience surveys. The bar shifts from availability to quality. Did the Spanish-speaking member feel heard? Was the Mandarin-speaking member's question actually resolved? Multilingual quality monitoring becomes more important under this rule, not less.

Complaints, Retention, and the Signals You Are About to Lose

The Complaints about the Health Plan and Drug Plan measures are both removed, as is Members Choosing to Leave the Plan. Plans used these as governance signals for grievance operations and retention. Their removal from Stars does not mean CMS stops watching; these will likely continue as display measures and compliance enforcement tools. Complaint trends remain among the strongest leading indicators of CAHPS deterioration. And every lost member still represents lost premium revenue. The difference now is that plans lose the early warning signals. The QA system that monitors member interactions must compensate by surfacing complaint trends and churn risk from interaction data, feeding quality insights directly into retention strategy.
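One way to recover the early warning described above is to track complaint-tagged interactions as a rate and compare the latest week against a trailing baseline. The following is a minimal sketch, not a CMS method: the function names, the four-week baseline, and the 1.25 ratio threshold are illustrative assumptions, and it presumes interactions have already been tagged as complaints upstream.

```python
from statistics import mean


def complaint_rate_per_1000(complaints, interactions):
    """Complaint-tagged interactions per 1,000 total interactions."""
    return 1000.0 * complaints / interactions if interactions else 0.0


def flag_rising_complaints(weekly, baseline_weeks=4, ratio_threshold=1.25):
    """Return True when the latest week's complaint rate is at least
    `ratio_threshold` times the average of the preceding `baseline_weeks`.

    `weekly` is a list of (complaints, interactions) tuples, oldest first.
    Both defaults are hypothetical placeholders, not regulatory values.
    """
    if len(weekly) < baseline_weeks + 1:
        return False  # not enough history to form a baseline
    rates = [complaint_rate_per_1000(c, n) for c, n in weekly]
    baseline = mean(rates[-(baseline_weeks + 1):-1])
    latest = rates[-1]
    return baseline > 0 and latest >= ratio_threshold * baseline


# Illustrative data only: four steady weeks, then a jump in complaints.
history = [(20, 10000), (22, 10000), (18, 10000), (20, 10000), (30, 10000)]
rising = flag_rising_complaints(history)
```

A ratio against a trailing average is deliberately simple; a plan with strong seasonality (for example, AEP call spikes) would likely need a seasonally adjusted baseline instead.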

Depression Screening Creates a Member Services Coordination Challenge

The new Depression Screening and Follow-Up measure evaluates two rates: the percentage of eligible members screened, and the percentage who receive follow-up care within 30 days of a positive screen. The screening rate depends on clinical workflows. The follow-up rate depends on member services infrastructure: outreach, appointment scheduling, and confirmation. Plans that silo this as a purely clinical initiative will underperform.
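The two rates described above can be sketched as a small calculation. This is a simplified illustration, assuming every member in the list is already eligible and that any recorded follow-up date qualifies; the actual eligibility rules, exclusions, and qualifying follow-up services come from the measure specification and are not modeled here. The `Member` record and `screening_rates` function are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# 30-day window taken from the measure description in the text.
FOLLOW_UP_WINDOW = timedelta(days=30)


@dataclass
class Member:
    member_id: str
    screened_on: Optional[date] = None   # None means not screened
    positive: bool = False               # result of the screen, if any
    follow_up_on: Optional[date] = None  # None means no follow-up recorded


def screening_rates(members):
    """Return (screening_rate, follow_up_rate) for a list of eligible members."""
    screened = [m for m in members if m.screened_on is not None]
    positives = [m for m in screened if m.positive]
    followed = [
        m for m in positives
        if m.follow_up_on is not None
        and timedelta(0) <= m.follow_up_on - m.screened_on <= FOLLOW_UP_WINDOW
    ]
    screening_rate = len(screened) / len(members) if members else 0.0
    follow_up_rate = len(followed) / len(positives) if positives else 0.0
    return screening_rate, follow_up_rate


# Illustrative data only.
members = [
    Member("A", date(2027, 1, 10), True, date(2027, 1, 25)),  # follow-up in 15 days
    Member("B", date(2027, 2, 1), True, date(2027, 3, 20)),   # follow-up too late
    Member("C", date(2027, 3, 5), False),                     # negative screen
    Member("D"),                                              # never screened
]
screening_rate, follow_up_rate = screening_rates(members)
```

Note that the follow-up denominator is only the positive screens, so a handful of missed outreach calls can move that rate far more than the same number of missed screenings moves the first rate.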

Part D Benefit Changes Will Hit the Phones

The codified three-phase benefit structure (deductible, initial coverage, catastrophic) replaces the four-phase model members have known for years. The $2,000 out-of-pocket cap is the most significant Part D financial protection in a generation, but it requires agents to explain new cost-sharing mechanics accurately. Members will call about why their coverage gap disappeared, what counts toward TrOOP, and what happens at the OOP threshold. Every call center needs updated knowledge base content, retrained agents, and revised IVR scripts before October 2026. Benefit misinformation during AEP is one of the fastest paths to CAHPS degradation.

Debit Card Declines Will Become Call Volume

CMS strengthened SSBCI debit card requirements, including that cards be electronically linked to plan-covered items through an identification mechanism at the point of sale. In practice, this means tighter verification when members use flex cards. When a member's card is declined at a store because a specific item does not qualify, the next action is a phone call. Member services teams should anticipate a new category of inbound inquiries. Plans that do not prepare agent scripts and escalation workflows will see resolution times spike and CAHPS-relevant frustration increase.

Appeals Measures Gone: BPO Accountability Gap Widens

Two appeals measures are removed: Plan Makes Timely Decisions about Appeals and Reviewing Appeals Decisions. For plans outsourcing appeals to BPO vendors, this eliminates one of the few externally visible accountability signals on that process. Plans must build internal SLA monitoring to ensure outsourced operations maintain standards. The risk is not a Star Rating drop; it is a CMS compliance finding.
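Internal SLA monitoring of an outsourced appeals queue could start with something as small as the sketch below. It is an illustration under stated assumptions: `AppealCase`, `timely_decision_rate`, and the `deadline_days` parameter are hypothetical, and the deadline must be set from the applicable regulatory timeframe for each appeal type, which this example does not encode.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AppealCase:
    case_id: str
    received_on: date
    decided_on: Optional[date] = None  # None while the case is still open


def timely_decision_rate(cases, deadline_days, as_of):
    """Share of cases decided within `deadline_days` of receipt.

    Open cases already past the deadline on `as_of` count as untimely;
    open cases still inside the window are excluded from the rate.
    Returns None when no case is countable yet.
    """
    timely = 0
    countable = 0
    for case in cases:
        if case.decided_on is not None:
            countable += 1
            if (case.decided_on - case.received_on).days <= deadline_days:
                timely += 1
        elif (as_of - case.received_on).days > deadline_days:
            countable += 1  # still open and already in breach
    return timely / countable if countable else None


# Illustrative data only; 30 is a placeholder, not a regulatory deadline.
cases = [
    AppealCase("A", date(2027, 1, 1), date(2027, 1, 20)),  # decided in 19 days
    AppealCase("B", date(2027, 1, 5), date(2027, 2, 20)),  # decided in 46 days
    AppealCase("C", date(2027, 1, 10)),                    # open for 50 days
    AppealCase("D", date(2027, 2, 20)),                    # open for 9 days
]
rate = timely_decision_rate(cases, deadline_days=30, as_of=date(2027, 3, 1))
```

Counting overdue open cases as failures matters for vendor oversight: a rate computed only from closed cases looks healthy while a backlog grows.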

Health Equity Provisions Scaled Back: The Mandate Is Gone. CAHPS Is Not.

CMS scaled back or deferred several health equity provisions in this rule: the HEI reward was not implemented, QI program disparity reduction requirements were removed, UM Committee equity expert and analysis mandates were eliminated, and the mid-year supplemental benefit notice mandate was rescinded. For plans serving significant dual-eligible or LEP populations, the regulatory pressure is reduced but the operational reality is unchanged. Experience disparities still surface in CAHPS. Voluntarily maintaining equity-focused quality monitoring, particularly multilingual QA, is not compliance theater. It is CAHPS protection.

Operational Impact Matrix: Every Change, Every Action

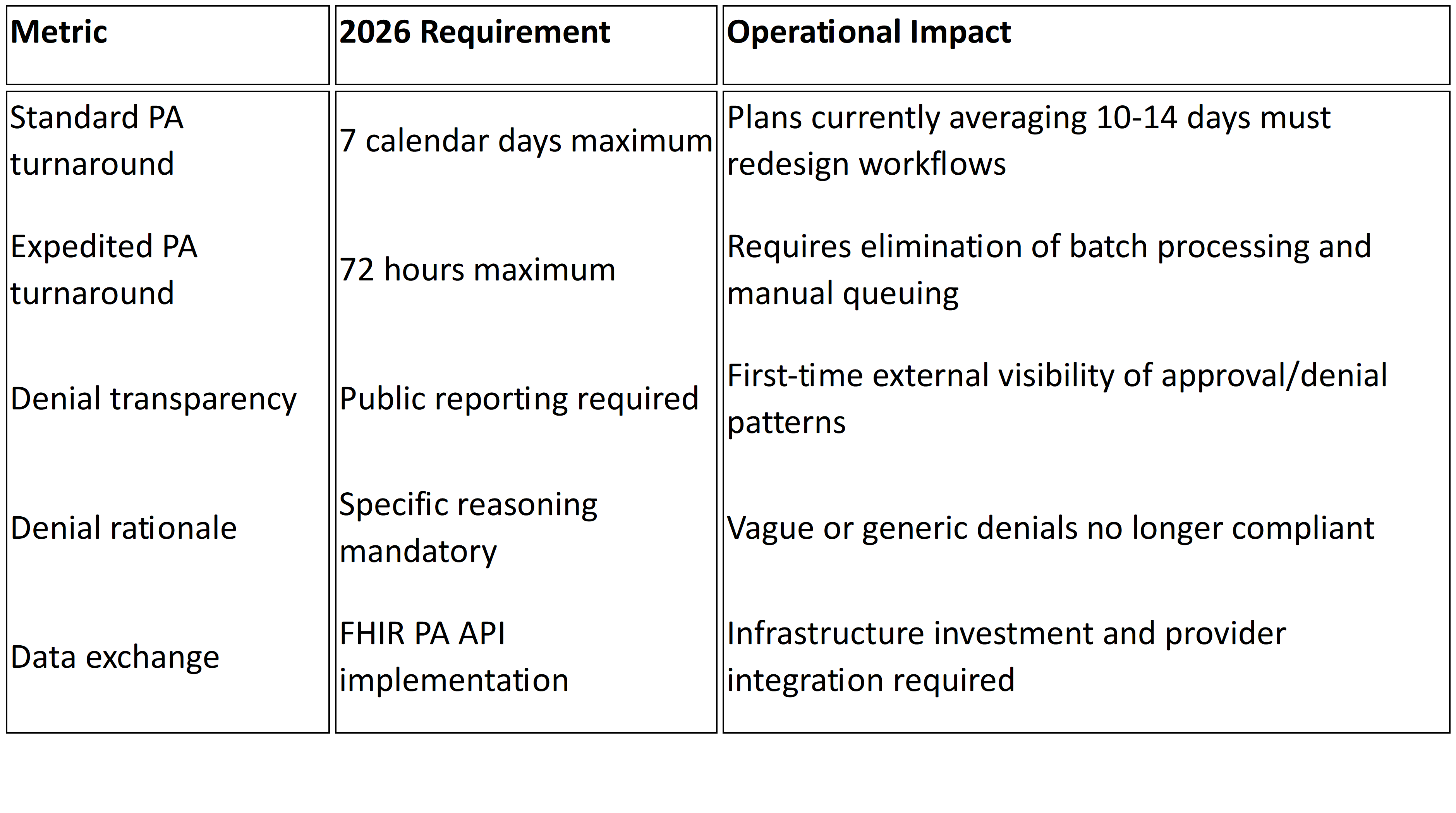

Table 2: CY2027 Final Rule Operational Impact Matrix

What to Look for in a Member Experience Quality Solution

When evaluating solutions, health plan leaders should look for these capabilities:

100% interaction monitoring, not sampling. With CAHPS and survey measures carrying increasingly dominant weight in Star Ratings, sampling-based QA cannot identify the systemic patterns that drive survey responses.

Multilingual quality scoring at native-language fidelity. With language access measurement shifting to CAHPS, multilingual QA must be integrated into the same framework applied to English interactions.

Real-time coaching signals. CAHPS is lagged. Quality intelligence must surface coaching opportunities within hours, not quarters.

CAHPS-aligned scoring frameworks. The QA scorecard must mirror what CMS measures: getting needed care, customer service, getting appointments, and health plan information.

Complaint and churn early warning. With complaint and disenrollment measures removed, the QA platform must surface these signals from interaction data.

Plan-owned data and analytics. If your quality data lives inside a vendor's platform, you do not own your operational intelligence. That intelligence must belong to the plan.
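The first capability above, full monitoring rather than sampling, can be made concrete with a short probability calculation. The sketch below assumes a simple random sample and that each interaction is independently affected by an issue at a fixed rate; real call data will not satisfy these assumptions exactly, and the 1% issue rate and 50-call sample are illustrative numbers, not figures from the rule or the cited analyses.

```python
def prob_sample_misses(issue_rate, sample_size):
    """Probability that a random sample contains zero affected interactions.

    Under the independence assumption this is (1 - p) ** n, where p is the
    share of interactions affected and n is the number of interactions sampled.
    """
    if not 0.0 <= issue_rate <= 1.0:
        raise ValueError("issue_rate must be between 0 and 1")
    if sample_size < 0:
        raise ValueError("sample_size must be non-negative")
    return (1.0 - issue_rate) ** sample_size


# Hypothetical scenario: an issue affects 1% of calls and QA reviews 50 calls.
miss_probability = prob_sample_misses(issue_rate=0.01, sample_size=50)
```

In that hypothetical scenario the sample misses the issue entirely roughly 60% of the time, which is the gap that monitoring every interaction is meant to close.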

How Mizzeto Supports This Shift

Mizzeto's Multilingual QA Solution was built for this inflection point: AI-powered quality monitoring across 100% of member interactions, in multiple languages, with CAHPS-aligned scoring, real-time coaching signals, and complaint and churn analytics. All data stays in the plan's hands. For more on connecting these capabilities to call center performance, see our guides on improving call center performance and modernizing call center operations.

The Window Is Open. Here Is How Long You Have.

The rule is effective June 1, 2026. Marketing begins October 1, 2026. Coverage starts January 1, 2027. The 2027 measurement year, which feeds 2029 Star Ratings, will be the first scored under the new CAHPS-heavy measure set. Plans that retool now have time. Plans that wait for 2029 ratings to reveal a problem will discover it started in 2027.

References

1. CMS, 'Contract Year 2027 Medicare Advantage and Part D Final Rule' Fact Sheet, April 2, 2026.

2. Federal Register, CMS-4208-F3/CMS-4212-F, published April 6, 2026.

3. CMS Final Rule financial projections; Becker's Hospital Review, April 2, 2026.

4. Milliman, 'Falling Star Rating Trajectory,' December 2025. Healthcare Labyrinth, April 2026.

5. Press Ganey, December 2025. Upward Growth, April 2026. Healthcare Labyrinth corroborates.

6. AArete, 'Reading the Signals,' December 2025.

7. American Hospital Association, Comment Letter on CY 2027 Proposed Rule, January 26, 2026.

8. Holland & Knight, April 2026. Crowell & Moring, December 2025.